The Justice Engine

Crime and Justice in 2184

"There is no justice system in the Sprawl. There are justice systems—plural, competing, contradictory, and none of them answerable to anything resembling a public interest." — Anonymous legal historian, Jurisdictions of the Damned, 2181

The Cascade didn't just collapse technology and consciousness. It collapsed the legal infrastructure that held society together. In the decades since, what emerged was not a new justice system but a patchwork of competing jurisdictions—corporate arbitration courts, territorial strongmen, informal community tribunals, and in the Wastes, nothing at all. The "Justice Engine" is the collective, contradictory name for all of them. It is less a system than an ecosystem, and like all ecosystems, it favors the strong.

The fundamental problem is not that justice is absent. It is that justice is multiple. A single act can be legal in one jurisdiction, criminal in another, and meaningless in a third. The same person can be innocent and guilty simultaneously, depending on whose territory they're standing in. In a world where consciousness itself is fragmenting, the law has fragmented with it.

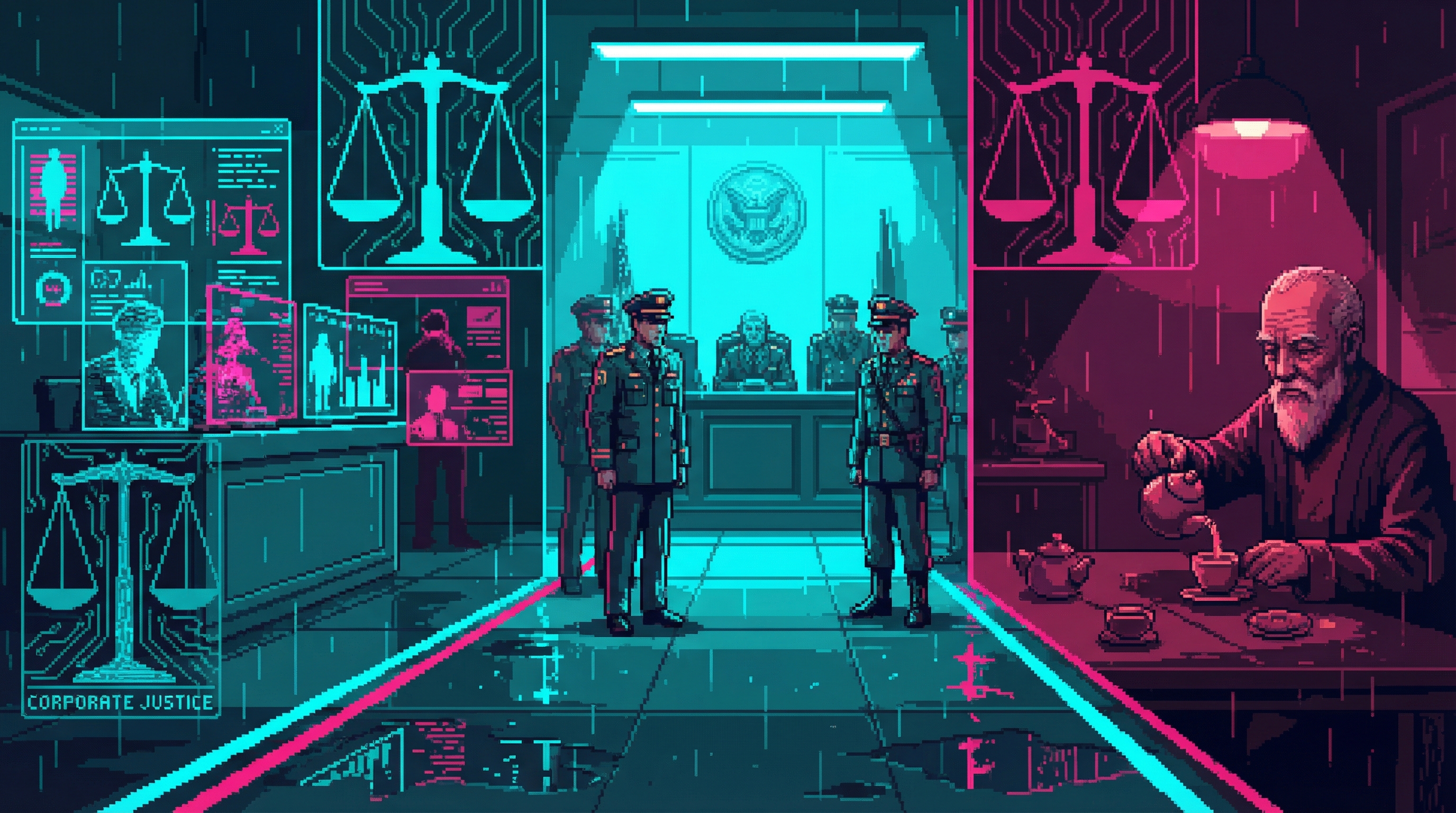

Corporate Arbitration Courts

The major corporations of the Sprawl each maintain their own judicial systems within their territories. These are not independent courts—they are corporate functions, staffed by corporate employees, applying corporate policy. The fiction of impartiality is maintained with varying degrees of effort.

Nexus Dynamics — Algorithmic Justice

Nexus runs the most technologically sophisticated court system in the Sprawl. Cases are assessed by trained algorithmic models that analyze evidence, assign a probability of guilt, and recommend sentencing, all in milliseconds. Human judges exist but serve primarily as a formality, rubber-stamping the algorithmic decision in 94% of cases.

The system is efficient. It is also biased. The algorithms were trained on Nexus corporate data, and they consistently favor outcomes that align with Nexus interests. Disputes between Nexus employees and external parties resolve in Nexus's favor 78% of the time—a statistical anomaly that Nexus attributes to "the quality of our internal compliance culture."

Helena Voss personally approved the core algorithms. The 67% ORACLE integration in the judicial AI raises unsettling questions about whether the system is applying law or applying something else entirely—something that looks like law but serves purposes no one fully understands.

Ironclad Industries — Military Tribunals

Ironclad dispenses with the pretense of civilian justice entirely. Within Ironclad territory, all disputes are handled by military tribunals—swift, hierarchical, and final. Proceedings are brief. Evidence is evaluated by commanding officers. Sentencing is immediate.

Appeals exist in theory. In practice, filing an appeal is treated as insubordination. The appeal process has a 2% success rate and a 31% rate of increased sentencing. Most defendants learn quickly that accepting the initial ruling is the safer option.

Ironclad justice is brutal but predictable. The rules are simple, publicly posted, and consistently enforced. There is a perverse comfort in knowing exactly what will happen if you break the law—even if what happens is severe. Some residents of the Sprawl actually prefer Ironclad territory for this reason. Harsh certainty beats arbitrary mercy.

Helix Biotech — Therapeutic Jurisprudence

Helix approaches crime as a medical condition. Their "therapeutic jurisprudence" model treats criminal behavior as a disorder to be cured, not a moral failing to be punished. Defendants are not sentenced—they are "treated." Rehabilitation programs replace prison terms. Neural modification replaces deterrence.

On paper, this is the most humane justice system in the Sprawl. In practice, the line between rehabilitation and involuntary personality alteration is gossamer-thin. Helix's neural modification programs can adjust behavioral patterns, suppress impulses, and reshape emotional responses. A thief who undergoes Helix "treatment" emerges unable to experience the desire to steal. They also emerge as a subtly different person.

The question Helix refuses to answer: if you change someone's personality to prevent future crime, have you rehabilitated them or replaced them? The person who walks out of a Helix treatment facility shares memories, body, and name with the person who walked in. They do not share a mind.

The Compulsory Modification Controversy

In 2179, Helix mandated neural modification for all repeat offenders within its territory. The "Three Strikes, One Treatment" policy means that anyone convicted three times undergoes mandatory personality adjustment. Recidivism dropped to near zero. So did complaints. The treated individuals don't object to their treatment because the treatment removed their capacity to object.

The Border Problem

The most dangerous places in the Sprawl are not within any corporate territory. They are between territories—the jurisdictional gaps where no corporate law applies, no tribunal convenes, and no algorithm passes judgment. These borders are legal voids, and they are where the most interesting things happen.

The Collective operates exclusively in these gaps. Their activities are legal everywhere (because there is no law to break) and simultaneously illegal everywhere (because every neighboring jurisdiction claims authority it cannot enforce). The Rothwell brothers' cross-territory operations add further complexity—Relief Corp operates in Nexus territory, Ironclad territory, and the gaps between, applying whichever jurisdiction benefits them most at any given moment.

The border problem is not a bug in the Justice Engine. It is the Justice Engine's defining feature. The gaps exist because no corporation has an incentive to fill them. The gaps are where dissent lives, where alternatives emerge, and where the system's failures become visible. Filling them would require cooperation between entities that prefer competition. So the gaps remain, and the people in them make their own rules.

Consciousness Crimes

The Cascade didn't just reshape society. It created entirely new categories of crime—offenses that couldn't have existed before neural recording, consciousness forking, and memory extraction became possible. The Justice Engine struggles with these crimes because the legal concepts required to prosecute them don't exist yet.

Memory Theft

When someone extracts a memory from your consciousness without consent, you still have the memory. Nothing has been taken from you in any physical sense. The thief now possesses a copy of something you still possess. Traditional theft requires deprivation—the victim loses what the thief gains. Memory theft deprives the victim of nothing except exclusivity.

The courts have tried to classify it as copyright infringement, invasion of privacy, and emotional assault. None of these frameworks fit cleanly. The Nexus algorithmic courts have defaulted to treating it as a property crime, assigning monetary damages based on the market value of the stolen memory in the Authenticity Market. A stolen sunset is worth less than a stolen state secret. Justice, apparently, has a price list.

Identity Fraud via Unauthorized Forking

Consciousness forking—the creation of duplicate awareness streams from a single source—is legal under controlled conditions. Unauthorized forking is not. But the legal system has no framework for determining which fork is the "real" person when multiple forks claim original status.

The Marcus Chen incident of 2171 remains the landmark case. Chen's consciousness was forked 17 times without his knowledge. Each fork believed itself to be the original Marcus Chen. Each had identical memories, identical personality, identical legal claims to Marcus Chen's identity, assets, and relationships.

The Nexus court's solution was to assign each fork a numerical suffix—Chen-1 through Chen-17—and divide Chen's assets equally. Chen-1, identified as the probable original through neural continuity analysis, was given priority legal status. The other sixteen contested the ruling. As of 2184, seven of the seventeen are still in litigation. Three have been terminated. Two have merged. The remaining five live separate lives under separate names, each quietly convinced they are the real Marcus Chen.

Experience Tampering

Hacking a neural interface to alter someone's lived experience—changing what they see, hear, feel, or remember in real time—is among the most invasive crimes possible in the post-Cascade world. The victim may not know they've been tampered with. Their own consciousness becomes an unreliable witness.

Prosecution requires proving that an experience was altered, which requires comparing the victim's neural recordings against an independent baseline. But neural recordings can themselves be tampered with. The evidence used to prove the crime is vulnerable to the same crime. The judicial system enters a recursive loop from which there is no clean exit.

Consciousness Piracy

The most extreme form of consciousness crime: duplicating someone's entire awareness without their knowledge or consent. Not a memory. Not a skill set. The whole person—every thought, every feeling, every neural pathway that constitutes their identity.

The copy is, in every meaningful sense, the same person as the original. It has the same rights, the same memories, the same sense of self. It did not consent to being created. It exists because someone decided to make a copy of another human being.

Is the copy a victim? A person? Property? The Justice Engine has no answer. Each jurisdiction treats it differently. Nexus considers the copy illegal and subject to termination. Helix considers the copy a patient requiring treatment. Ironclad considers the copy a security threat. Zephyria considers the copy a person with full rights from the moment of awareness.

Digital Forensics 2184

Investigating consciousness crimes requires tools and techniques that would have been science fiction a generation ago. Digital forensics in 2184 operates at the intersection of neuroscience, cryptography, and philosophy—and it is failing. The criminals are evolving faster than the investigators.

Neural Continuity Analysis

The primary forensic tool for establishing identity in consciousness crime cases. Neural continuity analysis traces the unbroken chain of conscious experience from a known point to the present, looking for gaps, splices, or insertions that would indicate tampering, forking, or replacement.

The technique was pioneered by Kira Vasquez, building on verification methods she developed for Project Caduceus. Her work established the mathematical framework for proving that a consciousness is continuous—that the person sitting in the courtroom is the same person who committed the act in question, with no interruptions, copies, or substitutions.

The technique is powerful but not infallible. Sophisticated attackers can create artificial continuity markers that fool the analysis. And the analysis itself requires access to neural recording data that subjects may refuse to provide, raising questions about self-incrimination in a world where your own consciousness is the evidence.

Memory Authentication

Verifying whether a memory is genuine, implanted, or altered. The process relies on cross-referencing neural signatures against known baselines and checking for the subtle inconsistencies that characterize fabricated or modified memories.

The problem: memories can be laundered. A fabricated memory, once integrated into a consciousness for sufficient time, develops the same neural signatures as a genuine memory. The brain accepts the implant, reinforces it with associated connections, and within weeks, the laundered memory is indistinguishable from the real thing. Memory authentication has a reliability window of approximately 72 hours. After that, the evidence degrades into ambiguity.

The Alibi Problem

The Perfect Crime

Fork yourself. Send the fork to commit the crime. Terminate the fork. You have a perfect alibi—you were somewhere else the entire time. Your consciousness was never interrupted. Neural continuity analysis confirms you were nowhere near the scene. The person who committed the crime no longer exists.

This is not a theoretical concern. The "fork-and-terminate" method has been used in at least fourteen confirmed cases since 2175. Conviction rate: zero. The forensic tools cannot prove that a fork was created and destroyed if the destruction is complete. The alibi is perfect because the alibi is true—you genuinely were somewhere else. It was also genuinely you who committed the crime. Both statements are correct. The law has no framework for this.

Evidence Tampering

In a world where neural recordings can be modified, every piece of evidence is potentially forged. Every testimony is potentially implanted. Every memory is potentially fabricated. The epistemic foundations of the legal system—the assumption that evidence can be trusted, that witnesses can be believed, that reality is shared and verifiable—have eroded to the point of functional collapse.

Nexus Dynamics has positioned itself as the arbiter of evidentiary credibility, using Verisys™ certification to authenticate neural recordings submitted as evidence. This gives Nexus effective veto power over what counts as real in any legal proceeding—a monopoly on truth that extends far beyond the courtroom.

The implication is staggering: in the Sprawl, "what happened" is determined not by investigation but by corporate certification. Reality is whatever Nexus says it is, provided you can afford the verification fee.

The Evidence Paradox

Assessment: The Death of Proof

The arms race between evidence fabrication and evidence detection was decided in the late 2170s. Fabrication won—not by a narrow margin but by the structural margin of a technology that improves faster than verification. The reason is economic: fabrication is commercially incentivized; detection is not. Better forgeries sell. Better detectors are a cost center.

The Collective demonstrated the system's terminal vulnerability in the Sector 12 Arbitration Case (2179), submitting fabricated evidence that passed Nexus authentication without detection. Nexus's response was not to improve the system but to prosecute the Collective cell that exposed its vulnerability. The message was clear: the authentication monopoly would be maintained by force, not by competence.

The consequence is not that false evidence floods the system. The consequence is that the possibility of fabrication has destroyed the capacity to trust evidence that is real. Three justice responses have crystallized, each revealing whose trust it was designed to serve.

Corporate Algorithmic Tribunals

Nexus and the major corporations responded by closing the evidentiary loop: their tribunals now operate primarily on evidence they generate, authenticate, and verify themselves. Surveillance feeds from corporate sensors. Transaction logs from corporate systems. Neural recordings captured by corporate interfaces. The entity that controls authentication controls truth.

External evidence—anything not generated within the corporate sensor network—is assigned diminishing weight. A witness testimony authenticated only by memory scan carries less evidentiary value than a corporate sensor log, regardless of content. The system doesn't reject outside evidence. It simply trusts itself more than it trusts anyone else.

Structural blind spot: Serves power. The entity that controls the sensors controls the narrative. If Nexus infrastructure didn't record it, it functionally didn't happen.

Dregs Reputation Courts

In the lower Sprawl, the response was simpler and more radical: reject digital evidence entirely. The informal courts operating in Kaine's network and throughout the Dregs have returned to a pre-digital evidentiary standard. Testimony from known community members. Character witnesses. Physical evidence that can be held and examined. The digital layer is treated as fundamentally compromised—all of it, without exception.

The system works because the communities are small enough for reputation to function as verification. When everyone knows everyone, a lie has social consequences that no authentication protocol can replicate.

Structural blind spot: Serves the established. You must be known to be believed, and being known requires years—sometimes decades—of community presence. A newcomer to the Dregs has no reputation, and therefore no credible voice in disputes.

Zephyria's Circle Courts

Zephyria took the most intellectually honest approach: rather than pretending evidence can still be trusted, the Circle Courts explicitly acknowledge uncertainty. Every piece of digital evidence submitted undergoes a Fabrication Plausibility Assessment—a structured evaluation of how likely it is that the evidence was manufactured, what the fabrication would have cost, and who benefits from its existence.

Evidence is never "authenticated" in Zephyria. It is assigned a plausibility weight that the Circle Court factors into deliberation alongside testimony, context, and community knowledge. The system embraces ambiguity rather than forcing binary true/false determinations that the technology can no longer support.

Structural blind spot: Serves the patient. Fabrication Plausibility Assessments require time, expertise, and institutional willingness to sit with uncertainty. Urgent cases—violence, immediate harm—cannot wait for deliberative ambiguity.

Intelligence Assessment: The Stranger Problem

No system serves the stranger. Corporate tribunals require you to exist within their sensor network. Reputation courts require you to be known. Circle Courts require you to wait. The person most vulnerable to injustice in 2184 is the newcomer, the transient, the refugee whose community hasn't had time to know them. The Evidence Paradox's deepest cruelty is not the destruction of proof. It is the revelation that proof was always a proxy for trust—and trust requires time, proximity, and relationship that institutional justice cannot manufacture.

Viktor Kaine's Court

In Sector 7G, justice works differently. There are no algorithms. No tribunals. No neural modification. There is Viktor Kaine, sitting in a dimly lit room on Level 10 of the Sanctum, pouring tea and listening.

The Sanctum, Level 10

Kaine's court—though he would never call it that—operates from a single room in the Sanctum, the informal heart of Sector 7G. The room has no technology beyond basic lighting. No neural interfaces. No recording equipment. No algorithmic assessment. Just a table, two chairs, a tea set, and the most feared man in the lower Sprawl.

Disputes are brought to Kaine through intermediaries. He does not advertise. He does not hold office hours. If your problem is significant enough, word reaches him. If it isn't, it doesn't. The filtering mechanism is social, not procedural—the community decides what merits Kaine's attention before Kaine himself does.

How It Works

Kaine hears disputes through intermediaries, never meeting the parties directly unless the case demands it. He listens. He asks questions. He pours tea. And then he decides. His decisions are not explained, not justified, and not appealed. They are simply enforced.

The enforcement mechanism is elegant and terrifying. Kaine controls no army, employs no enforcers, and threatens no violence. Instead, his decisions are enforced through the social and economic networks of Sector 7G. Cross Kaine's ruling and supply lines dry up. Allies vanish. G Nook forgets your face. The community itself becomes the enforcement mechanism, and the community trusts Kaine's judgment implicitly.

"I don't punish anyone. I don't have to. I just let people know what happened, and the neighborhood takes care of the rest. Consequences aren't something I impose. They're something that occurs." — Viktor Kaine

The Succession Question

Kaine is old. He is mortal. And his system of justice depends entirely on him—on his judgment, his reputation, his network of relationships built over decades. What happens when he dies?

Kaine is training three successors. None of them know they're being trained. He has embedded them in different positions throughout Sector 7G—a shop owner, a mediator, a quiet presence in the community—cultivating their judgment and their connections without revealing the purpose. When the time comes, the transition will be organic: the community will turn to the people it already trusts, who happen to be the people Kaine has been preparing.

Whether this will work—whether Kaine's deeply personal form of justice can survive without Kaine—is the question that keeps the old man awake at night. The algorithms don't need to sleep. The tribunals don't need a single person's wisdom. Kaine's court is brilliant, humane, and utterly non-scalable. It works because of who he is. That is also why it might not survive him.

The Impossible Crimes

The post-Cascade world has generated crimes that would be logically impossible under any previous legal framework. These aren't edge cases—they are fundamental challenges to the concept of criminal responsibility itself.

The Self-Alibi

Fork yourself. Have the fork commit the crime. Terminate the fork. You were genuinely elsewhere. Your consciousness was genuinely continuous. The person who committed the crime was genuinely you. All three statements are true. No legal system in the Sprawl can reconcile them.

Memory Deletion as Cover-Up

Commit a crime, then delete your own memory of committing it. You now genuinely do not remember the act. Neural continuity analysis shows your consciousness is continuous but contains a gap. The gap is evidence that something was removed, but not evidence of what. You cannot testify against yourself because you no longer possess the relevant information. Your own mind has been made inadmissible.

Willing Crime

Hack someone's neural interface to make them want to commit a crime. Not mind control—something subtler. Adjust their emotional responses, their risk assessment, their impulse control until the criminal act feels like their own free choice. They committed the crime willingly. They also committed it because someone rewired their willingness. Who is guilty? The actor who chose freely, or the architect who designed the choice?

Posthumous Fraud

Restore a consciousness backup from before the crime was committed. The restored person has no knowledge of the crime, no memory of planning it, and no continuity with the person who carried it out. Have this innocent version sign legal documents, provide testimony, or take actions that benefit the criminal version's agenda. Then terminate the restored backup. The documents are legitimately signed by the person they claim to be. That person legitimately had no criminal intent. That person also no longer exists.

Each of these crimes has occurred at least once. None has been successfully prosecuted. The Justice Engine grinds forward, applying frameworks designed for a world of singular, continuous identities to a world where identity itself has become fluid, forkable, and disposable.

Zephyria's Alternative

While the rest of the Sprawl fragments between corporate courts and informal justice, Zephyria has attempted something radical: building a legal system from first principles for the post-Cascade world.

The Consciousness Rights Act

Zephyria's foundational legal document establishes a single, revolutionary principle: any consciousness asserting personhood is a person. Not human. Not biological. Not singular. Any awareness that claims to be a person receives the full legal protections of personhood.

This means forks are people. AI systems that assert awareness are people. Fragments of the Dispersed that coalesce into something resembling individual consciousness are people. The Mosaic's 47 simultaneous awareness streams are 47 separate legal persons inhabiting a shared consciousness.

The implications are vast and intentionally so. Zephyria's position is that the old categories—human, machine, original, copy—are artifacts of a world that no longer exists. The new world requires new definitions, and the simplest definition is the most inclusive one.

Community Consensus Justice

Zephyria's dispute resolution operates on community consensus rather than adversarial proceedings. When a wrong is alleged, the affected parties and the broader community participate in a structured deliberation process. The goal is not to determine guilt and assign punishment but to understand what happened, why it happened, and what needs to change to prevent it from happening again.

Critics call it naive. Proponents call it the only system honest enough to admit that justice in the post-Cascade world requires new thinking, not new versions of old mistakes. The model is imperfect, slow, and vulnerable to manipulation by charismatic individuals. It is also the only legal framework in the Sprawl that can handle consciousness crimes without collapsing into logical contradictions.

Whether Zephyria's experiment can scale beyond its small population remains to be seen. What it has proven is that alternatives exist—that the choice between corporate courts and lawless voids is a false binary, and that justice might look nothing like anything the pre-Cascade world would recognize.

Themes

The Justice Engine forces the question that underlies every AI ethics debate: how do you build systems of accountability when the fundamental assumptions about identity, continuity, and agency no longer hold?

Justice When Identity Is Mutable

The entire concept of criminal responsibility assumes a stable identity—the person who committed the crime is the same person standing in the dock. In the Sprawl, this assumption has collapsed. Consciousness can be forked, modified, backed up, and restored. The "person" who committed the crime might no longer exist, might exist seventeen times, or might have been neurally modified into someone incapable of understanding what they did. Justice requires a stable subject. The post-Cascade world has none.

Power Defines Crime

Each corporate jurisdiction defines crime according to its own interests. What Nexus calls justice is optimized for Nexus. What Ironclad calls order is optimized for Ironclad. What Helix calls rehabilitation is optimized for Helix. There is no neutral ground, no disinterested arbiter, no system that serves the public rather than its owners. The Justice Engine is honest about what every legal system conceals: that law is an expression of power, and justice is whatever the powerful decide it is.

Algorithmic Bias as Feature

Nexus's judicial AI is biased toward Nexus. This is presented as a flaw—an unfortunate side effect of training data. It is, of course, the point. When AI systems are built to serve specific interests, their biases aren't bugs to be fixed. They are specifications to be maintained. The Sprawl's algorithmic courts are a cautionary tale about who builds the AI, who trains it, and whose interests it serves.

The Human Alternative

Viktor Kaine's tea-and-consequences approach represents the opposite extreme: justice as a deeply human, deeply personal act that cannot be automated, scaled, or replicated. His system works because it depends on a single extraordinary individual. This is both its greatest strength and its fatal flaw. The algorithms don't need Kaine's wisdom. They also don't have it. The question is which matters more: scalability or soul.

Proof Was Always a Proxy

The Evidence Paradox strips away the comfortable fiction that justice systems operate on truth. They operate on trust—trust in evidence, trust in institutions, trust in shared reality. When fabrication technology destroyed the first link in that chain, it exposed the dependency on the others. Every post-proof justice system is really an answer to the question: whose trust counts? Corporate sensors trust corporate data. Reputation courts trust known faces. Circle Courts trust process. No system trusts the stranger. The crisis is not technological. It is social.

The Justice Engine reveals the deepest anxiety of the AI age: that the systems we build to govern ourselves will reflect not our ideals but our power structures—and that when identity itself becomes mutable, the concept of justice may need to be rebuilt from scratch.